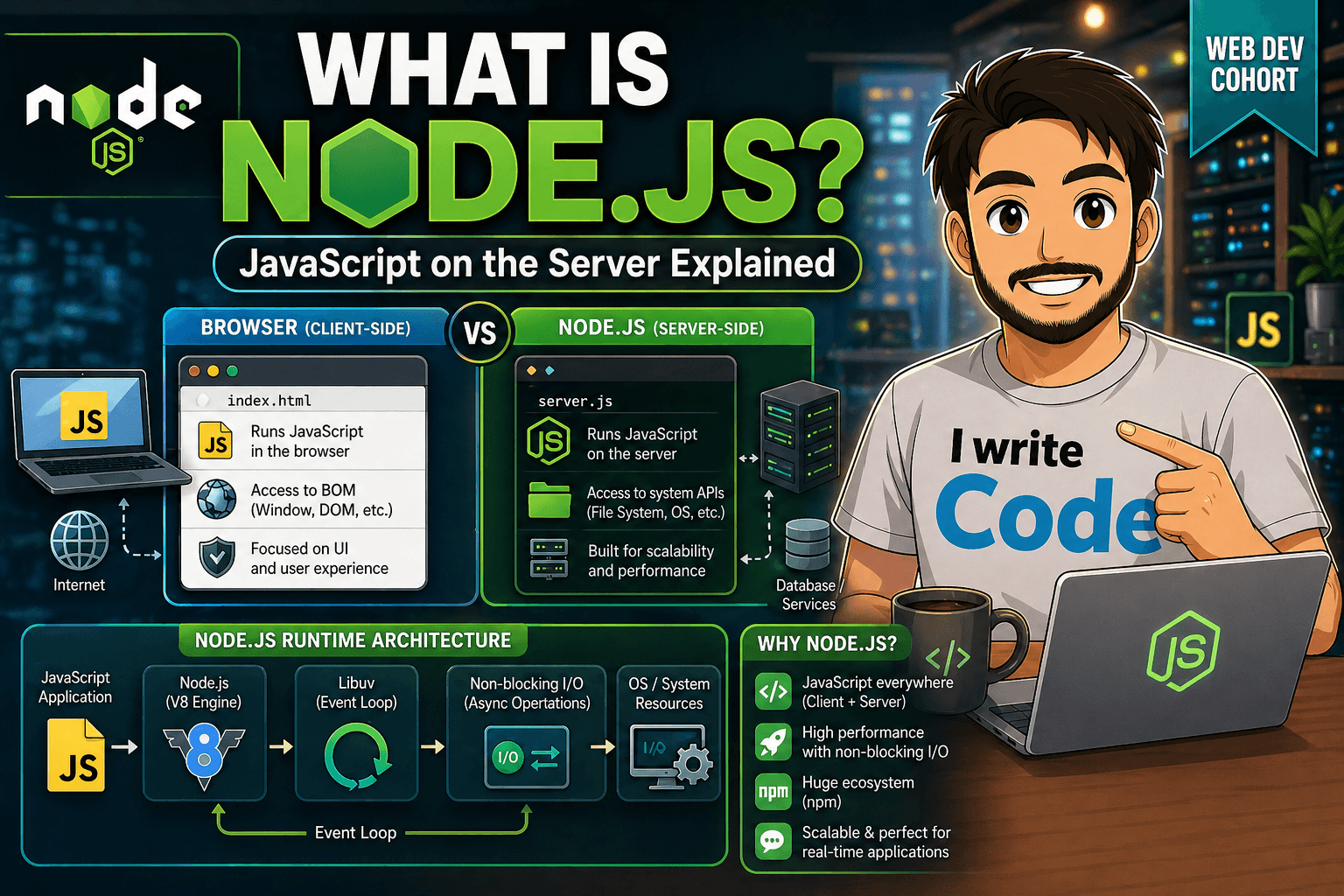

How Node.js Handles Multiple Requests with a Single Thread

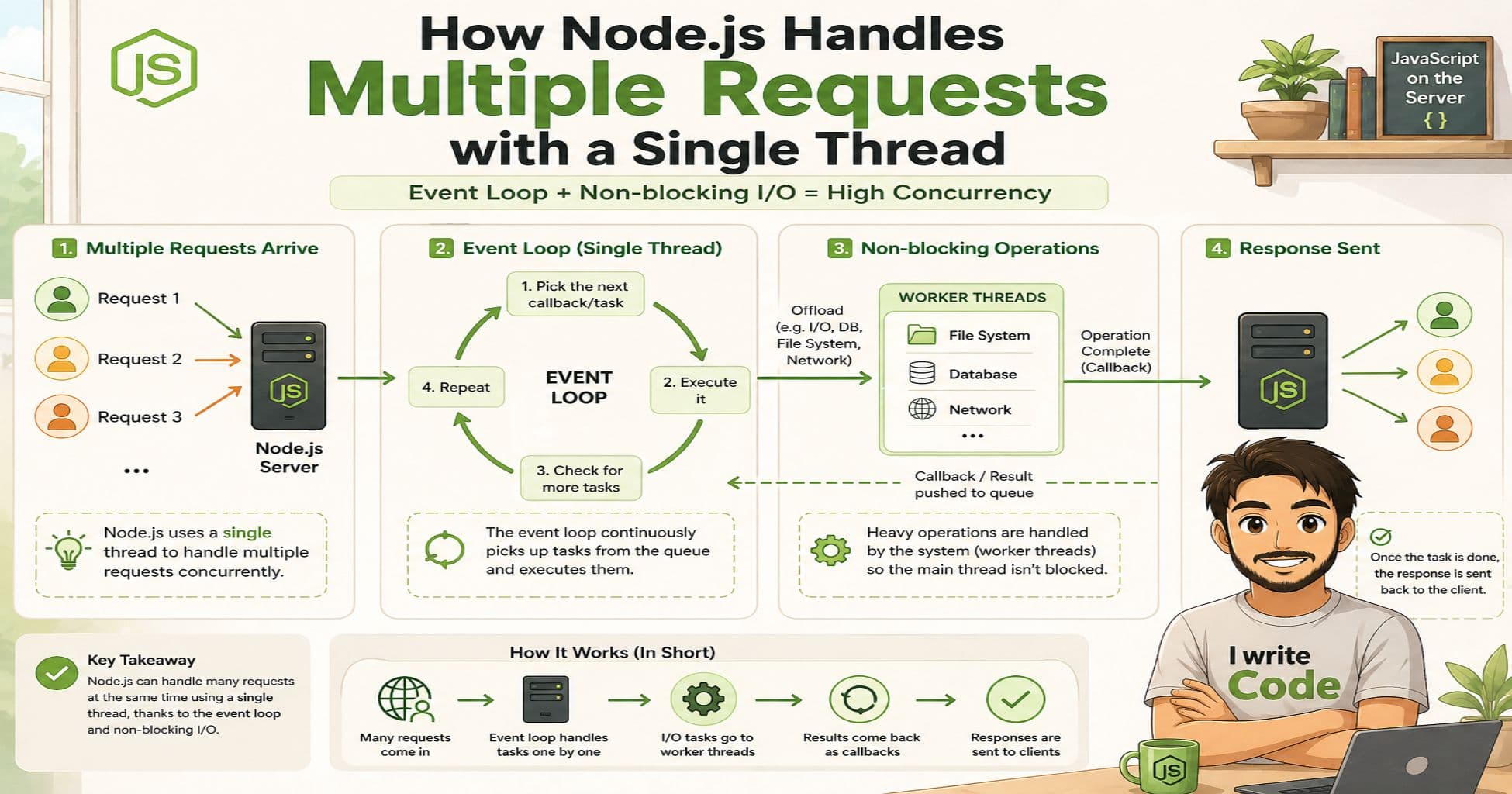

When you first hear that Node.js is “single-threaded,” it sounds like a major limitation. How can a single thread handle thousands of simultaneous users without freezing? The secret lies in Node.js’s clever architecture. Instead of relying on traditional multi-threading, Node.js uses an event-driven model, a non-blocking I/O system, and background task delegation to manage concurrency efficiently. In this guide, we will break down exactly how Node.js handles multiple requests, why the event loop is the heart of its performance, and how this design allows applications to scale effortlessly. Whether you are building a real-time chat app, an API, or a microservice, understanding these concepts will help you write faster, more resilient code.

The Single-Threaded Nature of Node.js

Unlike traditional web servers such as Apache (in its process- or thread-per-connection modes) or many Java-based servers, which typically dedicate a thread to each incoming request, Node.js runs your JavaScript on a single main thread. This might sound restrictive, but it is a deliberate design choice. Every thread needs its own stack memory, and the CPU has to context-switch between threads, both of which add overhead under heavy load. By sticking to one main thread, Node.js keeps per-connection memory low and avoids the cost of managing hundreds or thousands of parallel threads.

However, “single-threaded” does not mean “single-tasking” or “blocking.” Node.js’s I/O APIs are designed so that the main thread never has to wait on them. Instead of waiting for a database query, file read, or network call to finish, Node.js registers a callback and immediately moves on to the next task. When the external operation completes, its callback is queued for execution. This non-blocking behavior is what makes Node.js feel like it is doing many things at once, even though only one piece of your JavaScript runs at any given moment. The guarantee covers I/O only: a long synchronous computation in your own code will still stall the main thread.
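The ordering described above can be observed directly. The following is a minimal sketch, not a definitive pattern: the scratch file and the `readWithoutBlocking` helper are names made up for this illustration. It records when each step runs around an `fs.readFile()` call.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');

// Setup only: create a small scratch file so the example has something to read.
const scratchDir = fs.mkdtempSync(path.join(os.tmpdir(), 'nonblocking-'));
const scratchFile = path.join(scratchDir, 'note.txt');
fs.writeFileSync(scratchFile, 'hello from disk');

// Hypothetical helper: reads a file and reports the order in which steps ran.
function readWithoutBlocking(file, onDone) {
  const order = ['before readFile'];
  fs.readFile(file, 'utf8', (err, text) => {
    if (err) return onDone(err);
    order.push('callback ran');
    onDone(null, order, text);
  });
  // readFile() has already returned, so this runs before the callback above.
  order.push('after readFile');
}

readWithoutBlocking(scratchFile, (err, order) => {
  if (err) throw err;
  console.log(order.join(' -> '));
  // prints: before readFile -> after readFile -> callback ran
});
```

The line after `fs.readFile()` runs first because the call only registers the callback; the file contents arrive later, once the current synchronous code has finished.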

The Event Loop’s Role in Concurrency

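The magic behind Node.js concurrency is the event loop. Think of it as a continuous traffic controller. The event loop does not run in a separate background thread; it runs on the main thread itself. Once your top-level script has finished executing, Node.js enters the loop and stays in it for as long as there is pending work, such as an open server, an active timer, or an in-flight I/O request. Its job is simple but powerful: check for callbacks that are ready, execute them in order, and repeat.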

The event loop operates in distinct phases, each with its own queue of callbacks: timers (setTimeout() and setInterval() callbacks), pending callbacks (certain deferred system callbacks), an internal idle/prepare phase, poll (retrieving new I/O events and running their callbacks), check (setImmediate() callbacks), and close callbacks. When you make an asynchronous call, like fetching data from an external API or querying a database, Node.js hands that request off to the underlying operating system or to background worker threads. Your main thread immediately continues executing the next lines of code. Once the external operation finishes, its callback is placed in the queue for the appropriate phase, and the event loop executes your registered function when it reaches that phase.
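The phase ordering can be made visible with a small experiment. This is a sketch under stated assumptions: `observePhaseOrder` is a hypothetical helper, and the callbacks are scheduled from inside an I/O callback (here `fs.stat()` on the current directory) because that is the case where the relative order of `setImmediate()` and a zero-delay `setTimeout()` is deterministic.

```javascript
const fs = require('node:fs');

// Hypothetical helper: resolves with the order in which the callbacks ran.
function observePhaseOrder() {
  return new Promise((resolve, reject) => {
    // We are inside an I/O callback, so the loop is in its poll phase
    // and the check phase (setImmediate) comes next.
    fs.stat('.', (err) => {
      if (err) return reject(err);
      const order = [];
      setTimeout(() => {
        order.push('setTimeout');                             // timers phase, next iteration
        resolve(order);
      }, 0);
      setImmediate(() => order.push('setImmediate'));         // check phase
      Promise.resolve().then(() => order.push('promise'));    // microtask queue
      process.nextTick(() => order.push('nextTick'));         // runs before microtasks
      order.push('sync');                                     // rest of the current callback
    });
  });
}

observePhaseOrder().then((order) => {
  console.log(order.join(' -> '));
  // prints: sync -> nextTick -> promise -> setImmediate -> setTimeout
});
```

Note that `process.nextTick()` and promise callbacks are not phases of the loop. They run as soon as the current callback finishes, before the loop moves on.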

This model allows Node.js to manage thousands of concurrent connections without creating a dedicated thread for each one. As long as your JavaScript callbacks execute quickly and avoid heavy synchronous computations, the event loop stays highly responsive, and your server remains fast under pressure.
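To see why quick callbacks matter, the following sketch schedules a 10 ms timer and then keeps the main thread busy with synchronous work. The helper names `blockFor` and `measureTimerDelay` are made up for this illustration.

```javascript
// Busy-waits on the main thread for roughly `ms` milliseconds.
function blockFor(ms) {
  const end = Date.now() + ms;
  while (Date.now() < end) {
    // Spin: nothing else can run on the main thread during this loop.
  }
}

// Schedules a 10 ms timer, then blocks; resolves with the delay actually observed.
function measureTimerDelay(blockMs) {
  return new Promise((resolve) => {
    const start = Date.now();
    setTimeout(() => resolve(Date.now() - start), 10);
    blockFor(blockMs);
  });
}

measureTimerDelay(200).then((delay) => {
  console.log(`10 ms timer fired after ${delay} ms`);
});
```

The timer cannot fire until the busy loop returns, so the reported delay is at least the blocking time rather than 10 ms. Every other pending request on the same process would be held up in the same way.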

Delegating Tasks to Background Workers

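While JavaScript itself runs on a single main thread, Node.js is not working alone. Under the hood, it relies on libuv, a C library that provides the event loop and handles asynchronous I/O across different operating systems. Network sockets are handled with the operating system’s own non-blocking mechanisms (such as epoll on Linux, kqueue on macOS, or IOCP on Windows), which need no extra threads. For work that has no non-blocking equivalent at the OS level, libuv maintains a thread pool (four threads by default, adjustable with the UV_THREADPOOL_SIZE environment variable).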
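As an illustration of work that runs on the thread pool, the sketch below uses `crypto.pbkdf2()`, whose asynchronous form is executed on libuv's pool. The `deriveKeys` helper, the salt, and the iteration count are assumptions chosen for the demo, not recommended security settings.

```javascript
const crypto = require('node:crypto');
const { promisify } = require('node:util');

const pbkdf2 = promisify(crypto.pbkdf2);

// Hypothetical helper: derives one key per password. Each pbkdf2 call is
// queued on the libuv thread pool, so several can run at the same time.
async function deriveKeys(passwords, salt = 'demo-salt') {
  const jobs = passwords.map((pw) => pbkdf2(pw, salt, 100000, 32, 'sha256'));
  const keys = await Promise.all(jobs);
  return keys.map((key) => key.toString('hex'));
}

const started = Date.now();
deriveKeys(['alpha', 'bravo', 'charlie', 'delta']).then((keys) => {
  console.log(`derived ${keys.length} keys in ${Date.now() - started} ms`);
});

// This line prints first: the main thread is not waiting for the hashes.
console.log('main thread keeps going while the pool works');
```

With the default pool of four threads, the four derivations can proceed side by side. A fifth would wait for a free thread, which is one reason a larger `UV_THREADPOOL_SIZE` can help when an application leans heavily on these APIs.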

Tasks that go through the thread pool include file system operations, DNS lookups made with dns.lookup(), zlib compression, and the asynchronous functions of the crypto module, such as crypto.pbkdf2(). When you call fs.readFile(), for example, Node.js routes the work to the libuv thread pool. The main thread continues running your application logic, and once the background thread finishes, it notifies the event loop, which then runs your callback. For heavy JavaScript workloads, Node.js provides the Worker Threads module (worker_threads), which lets you run CPU-intensive JavaScript code on separate threads, in parallel with the main event loop. This layered delegation keeps long-running tasks from choking your application’s responsiveness.
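A minimal sketch of the Worker Threads approach is shown here. The worker's source is kept inline and started with the `eval: true` option so the example fits in one file; in a real project it would more likely live in its own module. The `fibInWorker` helper and the naive Fibonacci workload are assumptions chosen for the demo.

```javascript
const { Worker } = require('node:worker_threads');

// Inline source for the worker thread.
const workerSource = `
  const { parentPort, workerData } = require('node:worker_threads');
  // Deliberately naive, CPU-heavy recursion.
  function fib(n) { return n < 2 ? n : fib(n - 1) + fib(n - 2); }
  parentPort.postMessage(fib(workerData));
`;

// Hypothetical helper: computes fib(n) on a separate thread.
function fibInWorker(n) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(workerSource, { eval: true, workerData: n });
    worker.once('message', resolve);
    worker.once('error', reject);
    worker.once('exit', (code) => {
      if (code !== 0) reject(new Error(`worker exited with code ${code}`));
    });
  });
}

// The interval keeps firing while the worker computes, which shows that
// the main event loop is not blocked.
const ticker = setInterval(() => console.log('main thread is still responsive'), 50);

fibInWorker(30).then((result) => {
  clearInterval(ticker);
  console.log('fib(30) =', result); // prints: fib(30) = 832040
});
```

Running the same `fib(30)` call directly on the main thread would hold up every timer and request until it returned.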

Handling Multiple Client Requests

Let’s see how this architecture works in a real-world scenario. Imagine a 23-year-old user named Suprabhat visits your e-commerce platform. At the exact same moment, hundreds of other users are browsing products, adding items to carts, and checking out. In a traditional multi-threaded server, each request might occupy a dedicated thread, which can exhaust memory and CPU resources as traffic grows. In Node.js, the main thread accepts each connection, registers the required asynchronous operations (like database reads or external payment API calls), and immediately returns control to the event loop.

While Suprabhat’s request waits for a database response, Node.js is already processing the next user’s request. When the database finally replies, the event loop queues the callback, executes it, formats the JSON response, and sends it back to Suprabhat’s browser. This cycle repeats continuously. Because I/O operations are handled asynchronously and delegated to the OS or background threads, the main thread spends almost all its time accepting new connections and processing ready callbacks, not idly waiting.
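The scenario above can be simulated in a few lines. In this sketch, `fakeDbQuery` is a hypothetical stand-in for a real database call (it only waits on a timer), and `simulateTraffic` starts a server on a free local port, sends every request at once, and shuts the server down again.

```javascript
const http = require('node:http');

// Hypothetical stand-in for a database query: resolves after `ms` milliseconds.
function fakeDbQuery(userName, ms) {
  return new Promise((resolve) => {
    setTimeout(() => resolve({ user: userName, cartItems: 0 }), ms);
  });
}

// Builds a server whose handler awaits the fake query before replying.
function createShopServer(queryMs) {
  return http.createServer(async (req, res) => {
    const url = new URL(req.url, 'http://localhost');
    // While this await is pending, the main thread is free for other requests.
    const data = await fakeDbQuery(url.searchParams.get('user'), queryMs);
    res.writeHead(200, { 'Content-Type': 'application/json' });
    res.end(JSON.stringify(data));
  });
}

// Sends one GET request and resolves with the parsed JSON body.
function getJson(port, userName) {
  const path = `/?user=${encodeURIComponent(userName)}`;
  return new Promise((resolve, reject) => {
    http.get({ host: '127.0.0.1', port, path, agent: false }, (res) => {
      let body = '';
      res.setEncoding('utf8');
      res.on('data', (chunk) => { body += chunk; });
      res.on('end', () => resolve(JSON.parse(body)));
    }).on('error', reject);
  });
}

// Starts a server on a free port, sends all requests at once, then shuts down.
async function simulateTraffic(userNames, queryMs = 200) {
  const server = createShopServer(queryMs);
  await new Promise((resolve) => server.listen(0, '127.0.0.1', resolve));
  const { port } = server.address();
  const start = Date.now();
  const responses = await Promise.all(userNames.map((name) => getJson(port, name)));
  const elapsedMs = Date.now() - start;
  await new Promise((resolve) => server.close(resolve));
  return { responses, elapsedMs };
}

simulateTraffic(['Suprabhat', 'user-2', 'user-3', 'user-4', 'user-5']).then(
  ({ responses, elapsedMs }) => {
    console.log(`${responses.length} responses in ${elapsedMs} ms`);
  }
);
```

Each simulated query takes 200 ms, yet five simultaneous requests should finish in roughly the time of one query rather than five, because the waits overlap instead of queuing behind each other.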

Why Node.js Scales Well

Node.js scales well for I/O-bound applications, which cover a large share of modern web APIs, real-time dashboards, and microservices. Its low memory footprint means you can run many instances on a single server or distribute them across a containerized cluster. Because a single Node.js process executes JavaScript on only one core, tools like PM2 or the built-in cluster module let you run one process per CPU core, with all processes sharing the same server port. This raises capacity on a multi-core machine, usually without changes to your request-handling code, although state held in process memory (such as in-memory sessions) is not shared between the processes.

The event-driven architecture also aligns perfectly with modern cloud infrastructure. Load balancers can distribute incoming TCP/HTTP traffic across multiple Node.js instances, and because each instance handles thousands of concurrent connections efficiently, horizontal scaling becomes straightforward and cost-effective. While Node.js is not ideal for heavy CPU-bound tasks like video encoding or complex mathematical simulations (unless you explicitly use Worker Threads or offload to specialized services), it shines in real-time applications, REST/GraphQL APIs, streaming platforms, and collaborative tools.

Conclusion

Node.js proves that high concurrency does not require multi-threaded complexity. By combining a single-threaded event loop, non-blocking I/O, and intelligent background delegation, it delivers remarkable performance and predictable scalability. Understanding how the event loop orchestrates requests and how tasks are offloaded behind the scenes empowers you to write efficient, production-ready applications. As you continue building with Node.js, keep the event loop in mind, avoid blocking synchronous calls, and leverage background workers when heavy lifting is required. The result? Fast, scalable, and resilient applications that handle real-world traffic with ease.